AIPRM’s Ultimate Generative AI Glossary

Welcome to AIPRM’s definitive online glossary for generative AI.

From students to professionals, this resource is designed to empower every reader with a solid and clear understanding of the critical concepts that drive generative AI.

From exploring foundational terms such as neural networks and deep learning to understanding the nuances of GANs, this glossary has been curated to offer a structured and comprehensive pathway to becoming proficient in the language of generative AI.

Use this easy-to-understand glossary to confidently navigate the dynamic world of generative AI. Think of each term as a tool, helping you build a stronger understanding and fostering meaningful discussions in this fast-paced field. Discover and take command with the unmatched guidance provided by this resource, ready at your fingertips.

Using AIPRM is easy, even without a tech background. Still, getting to know these terms will give you a better understanding of the technology behind these tools. Install AIPRM and power up ChatGPT.

3D Autoencoders #

A specialized form of two-part neural network, applied to three-dimensional data, that includes an “encoder” and a “decoder.” The encoder compresses the initial data into a smaller representation. The decoder then tries to reconstruct the original data from that representation.

3D GAN #

A distinctive architectural framework within the Generative Adversarial Network (GAN) paradigm, specialized for the generation of three-dimensional shapes.

Activation Function #

A crucial element within artificial neural networks that transforms each neuron’s input signal into its output, usually in a nonlinear way. Many activation functions act like a gate: inputs above a threshold produce larger outputs, while inputs below it are suppressed or zeroed out.
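
As a rough illustration of the gating idea, here is a minimal Python sketch of two widely used activation functions, ReLU and sigmoid. The function names are chosen for this example and are not tied to any particular library.

```python
import math

def relu(x: float) -> float:
    """Pass positive inputs through unchanged; clamp negative inputs to zero."""
    return max(0.0, x)

def sigmoid(x: float) -> float:
    """Squash any real input into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

print([relu(v) for v in (-2.0, 0.5, 3.0)])  # [0.0, 0.5, 3.0]
print(sigmoid(0.0))  # 0.5
```

ReLU shows the gate behavior most directly: everything below zero is blocked, and everything above passes through.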

Active Learning #

A form of machine learning in which an algorithm actively queries a user (or another information source) to obtain labels for data. By choosing which examples to have labeled, it refines its performance with fewer labeled samples.

Actor-Critic Model #

A two-part algorithmic structure employed in reinforcement learning. Within this model, the “Actor” determines optimal actions based on the state of its environment. At the same time, the “Critic” evaluates the quality of state-action pairs, improving them over time.

Advantage Actor Critic (A2C) #

An advanced fusion of policy gradient and learned value function within reinforcement learning. This hybrid algorithm is characterized by two interdependent components: the “Actor,” which learns a parameterized policy, and the “Critic,” which learns a value function for the evaluation of state-action pairs. These components collectively contribute to a refined learning process.

Adversarial Attack #

An attempt to damage a machine learning model by giving it misleading or deceptive data during its training phase, or by later exposing it to maliciously engineered data, with the intent to degrade or manipulate the model’s output.

Adversarial Examples #

The building blocks of an adversarial attack: inputs deliberately constructed to provoke errors in machine learning models. These are typically valid inputs from the data set to which an attacker has made subtle alterations that exploit vulnerabilities in the model.

Agent #

Any software program or autonomous entity (such as a machine learning model) capable of acting or making decisions in pursuit of specific goals.

Agent-Based Modeling #

A method employed for simulating intricate systems, focusing on interactions between individual agents to glean insights into emergent system behaviors.

AI (Artificial Intelligence) #

A field encompassing the theory and crafting of computer systems with the capacity to execute tasks that traditionally require human intelligence, such as perception, speech comprehension, decision-making, and language translation. This can also refer to an individual machine learning model.

AI Music #

A musical composition made by or with AI-based audio generation.

AI Policy and Regulation #

The formulation of public sector frameworks and legal measures aimed at steering and overseeing artificial intelligence technologies. This facet of regulation extends into the broader realm of algorithmic governance.

AI Writer #

A software application that uses artificial intelligence to produce written content, mimicking human-like text generation. AI writing tools can be invaluable for businesses engaged in content marketing.

AI Writing #

Text written by, or with the assistance of, an AI writer.

ALBERT #

A cloud-centric artificial intelligence platform that helps integrate and manage an existing digital marketing tech stack.

AlphaGo Alpha Zero #

A specialized computer program devised by Google DeepMind to play the intricate Chinese strategy game Go. It showcases the potential of narrow AI by engaging in strategic gameplay akin to chess but with a far broader range of possible outcomes.

Artificial Neural Network (ANN) #

A key element of machine learning that serves as the cornerstone of deep learning. It uses an intricate network structure that mirrors the neural connections of the human brain.

Asynchronous Advantage Actor Critic (A3C) #

A robust reinforcement learning algorithm in which a policy and a value function are learned together. Multiple workers interact with their own copies of the environment in parallel and asynchronously update shared parameters, using multi-step returns for both the policy and value-function updates.

Attention Map #

A visual representation accentuating sections of an image pertinent to a specific target class. This offers interpretable insights into the inner workings of deep neural networks.

Attention Mechanisms #

An aspect of machine learning models enabling them to prioritize certain data segments during predictions. This mimics human cognitive focus by assigning different weights to distinct data elements, allowing the model to pay more attention to those elements.
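
To make the weighting idea concrete, here is a minimal Python sketch of scaled dot-product attention for a single query. This is one common form of attention, not the only one. The names `softmax` and `attend` and the toy vectors are assumptions made for this example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Weight each value by how well its key matches the query."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy data: the first key points in the same direction as the query.
weights, output = attend(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights, output)
```

The first key matches the query more closely, so the first value receives the larger weight and dominates the output.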

AUC-ROC (Area Under the Receiver Operating Characteristic Curve) #

A quantitative evaluation metric for classification tasks. The ROC curve plots the true positive rate against the false positive rate across threshold settings, and the Area Under the Curve (AUC) signifies how well the model separates the classes: 1.0 indicates perfect separation, and 0.5 is no better than chance.
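
One way to compute this metric for a binary classifier is to use the fact that AUC equals the probability that a randomly chosen positive example scores higher than a randomly chosen negative one. The sketch below takes that pairwise approach. The function name `auc_roc` and the sample data are illustrative assumptions.

```python
def auc_roc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly; ties count as half."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

print(auc_roc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```

The pairwise loop is quadratic in the number of examples, so it is only practical for small datasets. Production libraries usually sort the scores instead.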

Audio Generation #

The process of generating raw audio content such as speech or AI music by using artificial intelligence.

Autoencoder #

A specialized variant of artificial neural networks employed for unsupervised learning. Autoencoders master the dual functions of data encoding and decoding, facilitating efficient data representation and reconstruction.

Backdoor Attack #

A strategy employed to maliciously access computer systems or encrypted data by using covert pathways to evade conventional security measures.

Backpropagation #

Short for “backward propagation of errors,” this is an algorithmic bedrock of supervised learning for artificial neural networks. Backpropagation computes weight adjustments based on the gradient of an error function to help refine a model.

Batch Size #

The number of samples processed within a single iteration of model training. Batch size governs the pace of model updates and optimization.

Bayesian Optimization #

A methodology using probabilistic modeling to guide hyperparameter selection, enhancing the efficiency of optimization procedures in machine learning.

Bellman Equation #

A pivotal dynamic programming equation integral to discrete-time optimization problems, facilitating optimal decision-making within sequential contexts.
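
For reference, one common way to write the Bellman optimality equation for a Markov decision process is shown below. The notation is an assumption of this sketch and does not come from the glossary: $V^*(s)$ is the optimal value of state $s$, $P(s' \mid s, a)$ is the transition probability, $R(s, a, s')$ is the reward, and $\gamma \in [0, 1)$ is the discount factor.

```latex
V^*(s) = \max_{a} \sum_{s'} P(s' \mid s, a) \left[ R(s, a, s') + \gamma \, V^*(s') \right]
```

In words, the value of a state equals the best achievable expected immediate reward plus the discounted value of the state that follows.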

Bidirectional Encoder Representations from Transformers (BERT) #

An influential deep learning approach employed in natural language processing, enabling artificial intelligence programs to unravel contextual nuances in ambiguous text.

Black Box #

A system or model whose internal mechanisms or workings are not transparently understandable from its inputs and outputs. The inner processes are concealed, making it challenging to discern how the system arrives at specific decisions or predictions. While black box models such as deep neural networks can achieve high performance, their lack of interpretability can hinder understanding and trust.

BLEU Score #

A metric for automatic evaluation of machine-translated text. The BLEU score gauges the similarity between machine-generated text and a set of high-quality reference translations, yielding a value between zero and one.

Capsule Network #

A form of artificial neural network that models intricate hierarchical relationships. Drawing inspiration from biological neural organization, it aims to emulate more closely the structure of human neural connections.

Catastrophic Forgetting #

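The tendency of a neural network to abruptly lose previously learned knowledge when it is trained on new data or tasks. In reinforcement learning, it can appear when two similar game states yield dramatically divergent outcomes, causing confusion in the Q-function’s learning process.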

Chatbot #

An interactive software application that imitates human conversation via text or voice interactions, frequently found in online environments.

Class #

A distinct category or group that objects, entities, or data points can be classified into based on shared characteristics or features. Classes are used in various machine learning and pattern recognition tasks, such as image classification or text categorization, in which algorithms learn to differentiate and sort input data.

Computational Creativity #

A multidisciplinary domain that aims to replicate human creativity through computational methods by combining approaches from fields including artificial intelligence, philosophy, cognitive psychology, and the arts.

Computer Vision #

A subfield of artificial intelligence (AI) and computer science that focuses on enabling computers to interpret, understand, and process visual information from the world. It has a broad range of applications including object detection, image classification, facial recognition, and autonomous vehicles.

Confusion Matrix #

A pivotal tool for evaluating model performance by identifying misclassified objects, providing insights into model accuracy.
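
A confusion matrix can be built simply by counting (actual, predicted) pairs. The following Python sketch does this with made-up labels. The function name and the sample data are assumptions for illustration.

```python
from collections import Counter

def confusion_matrix(actual, predicted):
    """Count how often each (actual, predicted) label pair occurs."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    return Counter(zip(actual, predicted))

actual = ["cat", "cat", "dog", "dog", "dog"]
predicted = ["cat", "dog", "dog", "dog", "cat"]
matrix = confusion_matrix(actual, predicted)

# Correct predictions lie on the diagonal, where actual == predicted.
correct = sum(count for (a, p), count in matrix.items() if a == p)
print(matrix[("dog", "cat")])  # 1 (a dog misclassified as a cat)
print(correct / len(actual))   # 0.6
```

The off-diagonal counts show which classes the model confuses with each other, which overall accuracy alone does not reveal.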

Contextual Bandit #

An extension of the multi-armed bandit approach that considers contextual information to optimize decision-making in multi-action scenarios.

Continual Learning #

An approach that enables machine learning models to accumulate knowledge from a sequence of unrelated tasks, preserving previously acquired insights and applying them to new challenges. Also known as incremental learning or lifelong learning.

Convergence #

Convergence in machine learning refers to the state during training where a model’s loss stabilizes within a certain error range around its final value. It signifies that further training will not significantly enhance the model’s performance.

Convolutional Neural Network (CNN) #

A type of neural network that employs a type of mathematical operation called convolution to discern patterns and recognize objects within imagery. Its applications range from image recognition to feature extraction.

Corpus #

The full set of data utilized to train an artificial intelligence model. The model reviews this data to learn about a specific domain.

Cross-Validation #

A robust technique for assessing machine learning models by training them on only a subset of the data and evaluating them on a different subset.
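
A common variant is k-fold cross-validation, in which the data is divided into k parts and each part takes one turn as the evaluation set. The sketch below only generates the index splits. The function name `k_fold_splits` is hypothetical, and in practice the data is usually shuffled first.

```python
def k_fold_splits(n_samples, k):
    """Return k (train_indices, test_indices) pairs covering every sample once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    splits = []
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        splits.append((train_idx, test_idx))
        start += size
    return splits

for train_idx, test_idx in k_fold_splits(5, 2):
    print(train_idx, test_idx)
# [3, 4] [0, 1, 2]
# [0, 1, 2] [3, 4]
```

A model would be trained on each `train_idx` set and scored on the matching `test_idx` set, with the k scores then averaged.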

Curriculum Learning #

An approach in machine learning that mimics classical human education by introducing progressively more complex aspects of a problem. This enhances a model’s learning trajectory by ensuring it remains optimally challenged.

CycleGAN #

An image-to-image translation methodology using unpaired datasets to learn mappings between the input and output images.

Data Annotation #

The process of labeling and annotating data to facilitate supervised learning, enhancing a model’s understanding of the inputs.

Data Augmentation #

A technique where users artificially enrich the training set by adding modified copies of the same data.

Data Imbalance #

An uneven distribution of classes within a dataset. This can challenge model performance and accuracy.

Data Leakage #

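A phenomenon where information from outside the training data, such as test samples or the target value itself, inadvertently influences model training, producing overly optimistic results and compromising the model’s integrity.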

Data Poisoning #

A type of adversarial attack involving the manipulation of training data by deliberately introducing contaminated samples to skew model behavior and outputs.

Deep Deterministic Policy Gradient (DDPG) #

A reinforcement learning algorithm employing deep neural networks to learn optimal policies in continuous action spaces to maximize the expected long-term reward.

Deep Learning (DL) #

A type of machine learning that relates to intricate neural network architectures capable of hierarchical feature extraction.

Deep Q-Learning #

A pivotal approach in reinforcement learning that employs deep neural networks to approximate the Q-function, which it uses to determine the optimal course of action.

Deep Q-Network (DQN) #

A framework employing deep neural networks for Q-learning in reinforcement learning tasks.

Deepfake #

Synthetic media (typically images or videos) produced through digital manipulation by convincingly replacing one individual’s likeness with another’s.

Depth Estimation #

The task of predicting the depth of objects within an image. This is essential for various computer vision applications like self-driving vehicles.

Differentiable Neural Computers #

Advanced and typically recurrent neural network architectures enhanced with memory modules for complex learning and reasoning tasks.

Differentiable Rendering #

A process enabling gradients of 3D objects to be calculated and propagated through 2D images.

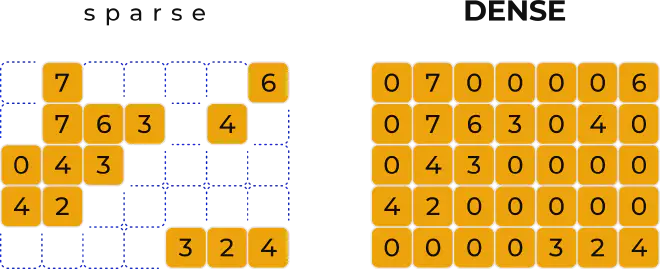

Dimensionality Reduction #

A technique for simplifying datasets by reducing their feature dimensions while preserving critical information.

Discriminative Models #

Models designed for supervised machine learning that focus on learning class boundaries and decision boundaries.

Double DQN #

A DQN technique that uses double Q-learning to mitigate overestimation biases and improve Q-value approximations.

Dropout #

A regularization technique involving the temporary exclusion of randomly selected nodes during neural network training.
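
The sketch below shows one common formulation, often called inverted dropout, in which the surviving activations are scaled up so that their expected total stays the same. The function name, its parameters, and the use of a seeded `random.Random` are assumptions for this example.

```python
import random

def dropout(activations, rate, rng, training=True):
    """Zero each activation with probability `rate`; rescale the survivors."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)  # dropout is switched off at inference time
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)  # seeded so the example is repeatable
print(dropout([1.0, 1.0, 1.0, 1.0], rate=0.5, rng=rng))
print(dropout([1.0, 1.0, 1.0, 1.0], rate=0.5, rng=rng, training=False))
```

With a rate of 0.5, each surviving value is doubled, and the second call returns the input unchanged because dropout applies only during training.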

Dueling DQN #

A reinforcement learning algorithm incorporating both value and advantage functions for improved Q-value estimation.

Dynamic Time Warping (DTW) #

A method for measuring similarity between time series data, commonly used in time series analysis and pattern recognition.
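
The classic way to compute DTW is with dynamic programming over a cost table. Here is a minimal Python sketch for one-dimensional series that uses absolute difference as the local cost. The function name and the sample series are illustrative assumptions.

```python
def dtw_distance(a, b):
    """Minimum cumulative cost of aligning series a with series b."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = best cost of aligning the first i items of a with the first j of b
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(
                cost[i - 1][j],      # a advances, b repeats
                cost[i][j - 1],      # b advances, a repeats
                cost[i - 1][j - 1],  # both advance
            )
    return cost[n][m]

print(dtw_distance([1, 2, 3], [1, 2, 2, 3]))  # 0.0
print(dtw_distance([1, 2, 3], [2, 3, 4]))     # 2.0
```

The first pair scores zero even though the series differ in length, because DTW allows one point to align with several points in the other series.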

Edge Learning #

A decentralized approach to machine learning where processing occurs on user devices, enhancing privacy and efficiency. This requires model compression because of the complexity of AI programs.

Embeddings from Language Models (ELMo) #

A word embedding technique that generates context-aware word representations by considering character-level tokens.

Epoch #

An iteration in the training process where the entire dataset is presented to a machine learning model.

FastText #

A word embedding technique that represents words as bags of character N-grams, facilitating efficient language processing.

Feature Extraction #

The process of distilling relevant information from raw data to create meaningful features for machine learning.

Feature Selection #

The task of identifying and retaining the most crucial features while discarding less relevant ones in a dataset to use only relevant data and ignore noise.

Federated Learning #

A collaborative machine learning paradigm where models are trained across distributed devices while preserving data privacy.

Few-Shot Learning #

A learning paradigm where models are trained on limited examples and expected to generalize their skills to unfamiliar but related tasks.

Fine-Tuning #

The process of adapting a pre-trained model to a new task by adjusting its parameters using task-specific data.

Fitting #

The process of adjusting the parameters of a model to best match observed data. Fitting involves minimizing the difference between the model’s predictions and the actual data points, typically achieved through optimization techniques like gradient descent. This process enables the model to generalize and make accurate predictions on new, unseen data.

Fitted Q Iteration (FQI) #

An algorithm in reinforcement learning used to approximate the Q-function and solve optimal control problems.

Gated Recurrent Units (GRU) #

A gating mechanism in recurrent neural networks designed to address the vanishing gradient problem, enhancing learning in sequences.

Generative Adversarial Network (GAN) #

A machine learning framework that enables the generation of realistic data by pitting a generative model against a discriminative model.

Generative 3D Modeling #

A technique for representing three-dimensional shapes as a series of processing steps using generative algorithms, simplifying design and fabrication processes.

Generative Design #

A design approach driven by AI, where algorithms generate multiple design options based on defined constraints and objectives.

Generative Model #

A machine learning model designed to generate data instances, contributing to a wide range of applications.

Generative Modeling #

A branch of machine learning focused on using AI to model and predict outcomes of hypothetical phenomena (such as how a car might crumple during a crash).

Generative Pretrained Transformer (GPT) #

A language model incorporating transformers for a diverse range of text generation and natural language processing tasks.

Genetic Algorithm #

A heuristic optimization technique inspired by natural selection and genetic processes to solve complex optimization problems.

Global AI Strategies #

Coordinated AI policies and regulations between governments aimed at fostering advancements and collaborations in artificial intelligence research and development.

Global Vectors for Word Representation (GloVe) #

A word embedding technique capturing semantic relationships between words based on global co-occurrence statistics.

GPT (Gizmo) #

GPTs are a feature inside ChatGPT that combines a prompt template, documents, and API instructions. GPTs are base setups, like characters. AIPRM manages task-specific prompts for each GPT.

Gradient #

The rate of change of a function with respect to its input variables, indicating the direction and magnitude of the change. This is useful in optimization algorithms like gradient descent to iteratively adjust model parameters and minimize errors.

Gradient Descent #

A fundamental optimization algorithm using gradients to minimize errors in machine learning models by iteratively adjusting parameters.
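
As a minimal sketch, the loop below applies gradient descent to the one-dimensional function f(x) = (x - 3)^2, whose gradient is 2(x - 3). The function name, learning rate, and step count are assumptions chosen for this example.

```python
def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to move toward a minimum."""
    x = start
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x

# Minimize f(x) = (x - 3)^2; its gradient is 2 * (x - 3), so the minimum is at x = 3.
minimum = gradient_descent(lambda x: 2.0 * (x - 3.0), start=0.0)
print(minimum)
```

Each step shrinks the distance to the minimum by a constant factor here, so the result lands very close to 3. Too large a learning rate would instead make the updates overshoot and diverge.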

Graph-Based Model #

A machine learning model that uses graph structures to represent and analyze relationships between data points.

Grid Search #

A hyperparameter tuning technique involving systematic exploration of a predefined hyperparameter space to optimize model performance.

Hierarchical RL #

A reinforcement learning paradigm focused on breaking down large processes into simple subtasks to improve decision-making across multiple levels of abstraction or hierarchy.

Hindsight Experience Replay #

A technique in reinforcement learning where failed experiences are replayed with alternate goals to improve learning efficiency.

Human-AI Collaboration #

Cooperation between humans and artificial intelligence to collectively accomplish tasks by leveraging their respective strengths.

Human-Centered Machine Learning #

An approach to machine learning that emphasizes human needs, ethics, and user experience in model development.

Human-in-the-Loop AI #

A paradigm where human input is integrated into AI processes, enhancing model performance and accountability.

Hyperparameter Tuning #

The process of optimizing hyperparameters to enhance machine learning model performance and generalization.

Hyperparameter #

A configurable setting in machine learning models that influences training behavior and performance.

Image Segmentation #

A computer vision task involving the division of an image into distinct segments to enable object detection and localization.

Image Synthesis #

The process of generating new images from existing ones using machine learning models, vital for creative applications.

Image-to-Image Translation #

An advanced computer vision technique that transforms images from one domain into another by learning the mapping between input and output images.

Image-to-Text Generation #

A challenging natural language processing task in which a model generates text descriptions of input images.

Imitation Learning #

An approach in machine learning where models learn by imitating human behavior, often used for training autonomous systems.

Instance #

A single data point in a dataset, characterized by a set of features and, in supervised learning, associated with a label.

Instance Segmentation #

A computer vision task that involves identifying and categorizing individual objects within an image, crucial for object detection.

Interpretability #

The study of explaining and understanding the decision-making processes of machine learning models, and of designing systems whose decisions can be easily understood. Also known as explainability.

Intrinsic Curiosity Module (ICM) #

A type of intrinsic motivation in reinforcement learning where agents are driven by internal curiosity signals to explore their environment.

Intrinsic Motivation #

A mechanism in reinforcement learning where models are driven to exhibit inherently rewarding behaviors like exploration and curiosity. It is modeled after the psychological concept of the same name.

Job Market #

Our AI Statistics report provides comprehensive information about the development of job markets due to AI.

Inverse Reinforcement Learning #

A technique where agents learn an underlying reward function from observed human behavior.

Knowledge Distillation #

A technique where a complex model transfers its knowledge to a smaller, simpler model, enhancing efficiency while retaining most of the original model’s accuracy.

Labeling #

The process of annotating data with class labels, enabling supervised machine learning.

Language Model #

A machine learning model trained to understand and generate human language, often used in natural language processing tasks.

Large Language Models (LLMs) #

A type of language model characterized by its extensive capacity to understand and generate human language.

Learning Rate #

A hyperparameter that determines the step size during model training in gradient-based optimization algorithms.

Lemmatization #

A text normalization technique in natural language processing that transforms words into their base or root form, enhancing language processing efficiency.

Lifelong Learning #

An approach where machine learning models continually learn from new data and adapt to evolving challenges. Also known as continual learning.

Local Interpretable Model-Agnostic Explanations (LIME) #

A technique in interpretability that uses a local, interpretable model to provide human-understandable explanations for the predictions of a black box model.

Long Short-Term Memory (LSTM) #

A type of recurrent neural network architecture designed to address the vanishing gradient problem, vital for sequential data processing.

Loss Function #

A mathematical function used to measure the difference between predicted and actual values in machine learning models.
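
Mean squared error is one widely used loss function for regression. Here is a minimal Python sketch, in which the function name and the sample values are illustrative.

```python
def mean_squared_error(actual, predicted):
    """Average of the squared differences between actual and predicted values."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)

print(mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))  # 0.0
print(mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))  # about 1.333
```

Squaring the differences penalizes large errors more heavily than small ones, which is one reason the choice of loss function affects what a model learns.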

Machine Learning (ML) #

An advanced discipline of artificial intelligence where algorithms learn from data to make informed decisions or predictions.

Machine Learning Algorithms #

Mathematical models and techniques employed to discern and understand inherent patterns within datasets, aiding in predictions, decisions, and insights.

Machine Learning Operations (MLOps) #

The practice of streamlining the deployment, management, and monitoring of machine learning models in real-world applications.

Machine Translation #

Translating text from one language to another using machine learning models.

Markov Decision Process (MDP) #

A mathematical framework used to model decision-making in scenarios with sequential actions and uncertain outcomes.

Memory Networks #

An AI model that combines reasoning abilities with a long-term memory component, learning to effectively utilize both. The long-term memory is read from and written to, aiming to enhance predictive capabilities. These networks are particularly explored in question-answering contexts, utilizing long-term memory as a dynamic knowledge base to generate relevant textual responses.

Meta Reinforcement Learning #

A higher-level reinforcement learning paradigm where agents learn to adapt and generalize their skills across different tasks.

Meta-Learning #

An approach where machine learning models learn to learn, acquiring knowledge that helps them rapidly adapt to new tasks. Meta-learning involves utilizing machine learning algorithms to effectively integrate predictions from other machine learning models.

Model Compression #

The process of optimizing deep learning models for deployment on resource-constrained devices without compromising performance, allowing for edge learning.

Model Deployment #

The operationalization of machine learning models, making them accessible for real-world applications and interactions.

Model Quantization #

A model compression technique that reduces model memory and computational requirements by representing parameters with fewer bits.
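
As an illustration, the sketch below applies symmetric 8-bit quantization to a short list of weights: each float is mapped to an integer in the range -127 to 127 with a single shared scale factor. This is one simple scheme among several, and the function names are assumptions for this example.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [-1.0, 0.0, 0.5, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
print(quantized)
print(restored)
```

Each weight now needs 8 bits instead of the 32 bits of a typical float, at the cost of a small rounding error of at most half the scale factor per weight.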

Model Robustness #

The ability of a machine learning model to perform consistently across different input data and scenarios.

Model Validation #

The process of assessing a machine learning model’s performance and generalization capabilities on unseen data.

Model-Based RL #

A reinforcement learning approach where agents learn a model of the environment to make decisions and plan actions.

Model-Free RL (Reinforcement Learning) #

A method in reinforcement learning that doesn’t rely on the transition probability distribution or reward function of the problem’s decision process, collectively known as the “model.” It instead operates on trial-and-error, exploring various solutions to optimize and achieve the most favorable outcome.

Momentum #

A technique in gradient-based optimization algorithms that accelerates convergence by incorporating past gradients.

Monte Carlo Method #

A stochastic algorithm that estimates mathematical values by generating random samples or simulations.
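
A classic illustration is estimating pi by sampling random points in the unit square and counting how many fall inside the quarter circle of radius 1. The function name, sample count, and seed below are assumptions for this sketch.

```python
import random

def estimate_pi(n_samples, seed=0):
    """Estimate pi from the fraction of random points inside a quarter circle."""
    rng = random.Random(seed)  # seeded so the example is repeatable
    inside = 0
    for _ in range(n_samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            inside += 1
    # The quarter circle covers pi/4 of the unit square.
    return 4.0 * inside / n_samples

print(estimate_pi(100_000))
```

The estimate improves slowly as samples are added, with the error shrinking roughly in proportion to one over the square root of the sample count.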

Monte Carlo Tree Search (MCTS) #

A decision-making algorithm often used in game-playing AI. It excels in managing intricate strategic games with vast search spaces, a challenge where conventional algorithms might falter due to the overwhelming number of possible actions.

Motion Prediction #

The task of forecasting future trajectories or actions of objects, often used in autonomous systems.

Multi-Agent RL #

A subfield of reinforcement learning where multiple agents interact and learn in a shared environment.

Multi-Armed Bandit #

A classic optimization problem where an agent chooses between multiple actions (“arms”) to maximize cumulative rewards.

Multi-Dimensional Scaling (MDS) #

A technique for visualizing high-dimensional data by projecting it onto a lower-dimensional space while preserving data similarity.

Multi-Head Attention #

An attention mechanism that allows a model to focus on different aspects of input data simultaneously.

Multi-Instance Learning #

A machine learning paradigm where each example in a dataset comprises multiple instances, enabling learning from groups of data.

Multi-Label Classification #

A classification task where each data instance can be assigned multiple class labels.

Multi-Objective RL #

A reinforcement learning scenario where agents optimize multiple objectives simultaneously, often leading to trade-offs.

Multilingual Models #

Machine learning models capable of understanding and generating text in multiple languages.

Multimodal Learning #

A training approach that leverages data from multiple modalities (e.g., text, image, audio) to improve model performance.

Multitask Learning #

An approach where a single machine learning model is trained to perform multiple related tasks simultaneously.

Music Generation #

The process of creating new AI music compositions using machine learning models.

N-Gram #

A contiguous sequence of n items (usually words) within a block of text, often used in language modeling and text analysis.
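
Extracting n-grams from a list of tokens takes only a sliding window. Here is a minimal Python sketch. The function name is illustrative, and splitting on whitespace stands in for a real tokenizer.

```python
def ngrams(tokens, n):
    """Return every contiguous run of n tokens as a tuple."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

tokens = "the cat sat on the mat".split()
print(ngrams(tokens, 2))
# [('the', 'cat'), ('cat', 'sat'), ('sat', 'on'), ('on', 'the'), ('the', 'mat')]
```

With n = 2 these are called bigrams, and with n = 3 they are called trigrams.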

Named Entity Recognition (NER) #

A natural language processing task involving the identification and categorization of named entities in text.

Narrow AI #

An AI model that can perform one specific task, and can’t generalize its experience to other tasks.

Natural Language Generation (NLG) #

The process of generating human-like text from structured data or other information sources.

Natural Language Processing (NLP) #

A subfield of artificial intelligence (AI) that focuses on enabling computers to interact with, process, and generate text or speech as humans do. It involves algorithms and models that help computers understand, interpret, and generate human language in a way that is both meaningful and contextually relevant.

Natural Language Understanding (NLU) #

The task of enabling machines to comprehend and interpret human language, which is essential for various applications.

Neural Architecture Search (NAS) #

An automated technique that explores and discovers optimal neural network architectures for specific tasks.

Neural Network #

A type of artificial intelligence model inspired by the human brain that is used to analyze and interpret complex data patterns.

Neural Network Pruning #

The process of reducing the size of a neural network by removing unnecessary connections.

Neural Radiance Fields (NeRF) #

A technique for generating detailed 3D reconstructions of objects and scenes from 2D images.

Neural Rendering #

A computer graphics technique that uses neural networks to generate realistic images based on existing scenes by simulating light transport.

Neural Style Transfer #

A transformative technique that combines the content of one image with the artistic style of another, yielding unique visual effects.

Neural Turing Machine (NTM) #

A neural network controller coupled to an external memory that it reads from and writes to via attention mechanisms. Because these memory interactions are differentiable, the whole system can be optimized through gradient descent.

Neuromorphic Computing #

Computer architecture that mimics the human brain’s structure using electronic circuits, aiding tasks like pattern recognition and cognitive processes.

Neurosymbolic AI #

A model that fuses statistical AI and symbolic reasoning, aiming to achieve general AI capabilities by combining data-driven and logic-based approaches.

Object Detection #

A computer vision technique that identifies and locates objects in images or videos, typically by drawing bounding boxes around them. It is crucial for applications like autonomous driving and surveillance.

Off-Policy Learning #

A reinforcement learning strategy in which the policy being improved differs from the policy used to collect experience, allowing learning from previously collected data.

On-Policy Learning #

A reinforcement learning method improving actions by evaluating and refining the same policy during interactions with the environment.

One-Hot Encoding #

A transformation of categorical data into binary vectors, a common technique for representing categorical variables in machine learning.
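
Here is a minimal Python sketch of the idea, in which each category gets its own position in the vector. The function name is illustrative, and sorting the categories is simply one way to fix a stable column order.

```python
def one_hot_encode(values):
    """Return the category order and one binary vector per input value."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    vectors = [
        [1 if index[value] == i else 0 for i in range(len(categories))]
        for value in values
    ]
    return categories, vectors

categories, vectors = one_hot_encode(["red", "green", "red"])
print(categories)  # ['green', 'red']
print(vectors)     # [[0, 1], [1, 0], [0, 1]]
```

Each vector contains exactly one 1, so the encoding does not imply any ordering between categories.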

One-Shot Learning #

The practice of training models to recognize new objects or concepts from a single example (or very few). This is valuable when available data is limited.

Open-Source AI #

The practice of openly sharing AI project source code for collaborative development, enabling community contribution and innovation.

OpenAI Codex #

An AI system that translates human language into code, streamlining programming tasks by generating code snippets.

OpenAI DALL-E #

A deep learning model that generates images from textual descriptions, showcasing the potential of AI in content creation.

OpenAI GPT-3 #

A language model that generates human-like text, applicable in chatbots, content creation, and more.

OpenAI GPT-4 #

The successor to GPT-3, advancing natural language understanding and generation capabilities.

OpenAI’s CLIP #

A model unifying vision and language understanding to associate images with textual descriptions.

Optical Flow #

A computer vision technique estimating object movement and velocity in images or videos, useful in tracking and motion analysis.

Option-Critic Architecture #

A reinforcement learning framework incorporating “options” to enhance agents’ decision-making flexibility.

Out of Vocabulary (OOV) #

A term absent from a language model’s training data, posing challenges in understanding new language.

Out-of-Distribution Detection #

The task of identifying data instances in machine learning that deviate significantly from the trained model’s input distribution. This is useful to defend against adversarial attacks.

Overfitting #

When a machine learning model performs well on training data but poorly on new, unseen data due to excessive fitting.

Panoptic Segmentation #

An advanced computer vision task combining instance and semantic segmentation, enabling the model to comprehend an entire scene.

Part of Speech Tagging #

Labeling words in text with their grammatical roles (e.g., noun, verb). This is important for language-understanding tasks.

Partially Observable Markov Decision Process (POMDP) #

A generalization of the Markov decision process (MDP) designed to model decision-making in uncertain environments where agents lack complete information.

Perplexity #

A language model’s measure of how well it predicts text, indicating its understanding of context. The lower the perplexity, the less likely the model is to be “surprised” by new text.
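
One common way to compute perplexity is to exponentiate the average negative log-probability the model assigned to each token. The sketch below assumes those per-token probabilities are already available. The function name and the sample probabilities are made up for illustration.

```python
import math


def perplexity(token_probabilities):
    """Return exp of the average negative log-probability per token."""
    n = len(token_probabilities)
    avg_neg_log_prob = -sum(math.log(p) for p in token_probabilities) / n
    return math.exp(avg_neg_log_prob)


# Hypothetical probabilities a model assigned to the tokens that actually occurred.
confident = perplexity([0.9, 0.8, 0.95])
uncertain = perplexity([0.2, 0.1, 0.25])
print(confident < uncertain)  # True: the less "surprised" model scores lower
```

A model that assigns every token a probability of 0.25 has a perplexity of 4, as if it were choosing uniformly among four options at each step.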

Principal Component Analysis (PCA) #

A dimensionality reduction technique that projects data onto the directions of greatest variance, facilitating visualization and analysis of complex datasets.

Pix2Pix #

A conditional generative adversarial network (GAN) that translates images from one visual domain to another (for example, sketches to photos) using paired training examples. It is valuable for style transfer and image enhancement.

Point Cloud-Based Model #

A model that uses a large collection of small data points to process and represent 3D information, important in various fields like robotics and computer graphics.

Policy #

A set of rules, strategies, or instructions that guide decision-making within an autonomous agent or system. It outlines the agent’s course of action based on its current state and the surrounding environment. Policies can be learned through reinforcement learning.

Policy Gradient #

A group of reinforcement learning techniques that optimize a policy directly by following the gradient of the expected reward with respect to the policy’s parameters.

Pose Estimation #

A computer vision task estimating positions and orientations of objects or human body parts in images or videos.

Precision, Recall, and F1 Score #

Metrics evaluating classification model performance based on true positives, false positives, and false negatives.
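
The sketch below computes the three metrics for a binary classifier from plain lists of labels. Precision is TP / (TP + FP), recall is TP / (TP + FN), and F1 is their harmonic mean. The function name, the `positive` parameter, and the sample labels are assumptions for this example.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    # Guard against division by zero when a denominator is empty.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 1, 1, 0, 0, 1]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(precision, recall, f1)  # 0.75 0.75 0.75
```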

Prioritized Experience Replay #

A reinforcement learning technique that replays experiences with higher learning importance to improve training efficiency.

Privacy in AI #

The practice of safeguarding user data and maintaining privacy while developing and using AI technologies.

Procedural Generation #

A type of algorithmic content creation, particularly prominent in video game development, that generates new gameplay elements like character designs, animations, and environments during gameplay.

ProGAN #

A generative adversarial network that uses progressive growth to produce high-resolution images.

Progressive Growth of GANs #

A training technique that increases GAN image resolution gradually, enhancing image quality and diversity.

Prompt Templates #

Prompt templates are pre-defined recipes for generating prompts for language models. AIPRM manages prompt templates of many types and augments them with user-defined variables and content from custom indexes (RAG).

Proximal Policy Optimization (PPO) #

A policy gradient method for reinforcement learning that optimizes policies gradually to ensure stable learning.

Q-Function #

A function that uses the Q-value to estimate future rewards for taking actions in given states, central to reinforcement learning.

Q-Learning #

A model-free reinforcement learning method using the Q-function to find optimal action choices through trial and error.
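
A minimal sketch of the standard tabular update, Q(s, a) ← Q(s, a) + α [r + γ max Q(s′, a′) − Q(s, a)], is shown below. The state and action names, the learning rate `alpha`, and the discount factor `gamma` are illustrative assumptions. A full agent would also need an exploration strategy and an environment loop.

```python
from collections import defaultdict


def q_learning_update(q_table, state, action, reward, next_state, actions,
                      alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning update and return the new Q-value."""
    # Best value obtainable from the next state under the current estimates.
    best_next = max(q_table[(next_state, a)] for a in actions)
    td_target = reward + gamma * best_next
    # Move the current estimate a fraction alpha toward the target.
    q_table[(state, action)] += alpha * (td_target - q_table[(state, action)])
    return q_table[(state, action)]


q_table = defaultdict(float)  # unseen (state, action) pairs start at 0.0
actions = ["left", "right"]
new_value = q_learning_update(q_table, "s0", "right", 1.0, "s1", actions)
print(round(new_value, 6))  # 0.1
```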

Q-Value #

A key value in reinforcement learning that quantifies the expected cumulative reward that will result from taking a specific action in a given state. It reflects the model’s learned knowledge about the potential outcomes and benefits of various actions. It helps the model make optimal decisions by guiding its choice of actions to maximize long-term rewards.

Quantum Machine Learning #

Integration of quantum computing techniques into machine learning for enhanced computational power.

Question-Answering Systems #

An AI system designed to comprehend and respond to questions posed in natural language.

RAG (Retrieval-Augmented Generation) #

RAG, or Retrieval-Augmented Generation, is an advanced AI framework that enhances large language models by pulling in current and precise information from external databases. This process ensures that the responses provided by these models are not only accurate but also up-to-date. Additionally, it offers users a clearer understanding of how these models generate their answers.

Rainbow DQN #

The integration of multiple reinforcement learning techniques to improve deep Q-network performance.

Random Search #

A hyperparameter tuning strategy involving random parameter selection to find optimal configurations.
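
The idea can be sketched in a few lines: sample each hyperparameter at random from its range, score the configuration, and keep the best one seen. Everything named here (`random_search`, `toy_objective`, the `lr` and `dropout` ranges) is hypothetical. In practice the objective would be a real validation loss.

```python
import random


def random_search(objective, search_space, n_trials=50, seed=0):
    """Sample random configurations and return the lowest-scoring one."""
    rng = random.Random(seed)  # seeded for reproducibility
    best_params, best_score = None, float("inf")
    for _ in range(n_trials):
        params = {name: rng.uniform(low, high)
                  for name, (low, high) in search_space.items()}
        score = objective(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_objective(params):
    # Stand-in for a validation loss, minimized at lr=0.1 and dropout=0.3.
    return (params["lr"] - 0.1) ** 2 + (params["dropout"] - 0.3) ** 2


space = {"lr": (0.0, 1.0), "dropout": (0.0, 0.5)}
best_params, best_score = random_search(toy_objective, space)
print(best_params, best_score)
```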

Rectified Linear Unit (ReLU) #

An activation function commonly used in neural networks that returns its input when the input is positive and zero otherwise, introducing non-linearity.
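
In its standard form, ReLU is simply max(0, x). The short sketch below applies it to a few sample values chosen for illustration.

```python
def relu(x):
    """Return x for positive inputs and 0.0 otherwise."""
    return max(0.0, x)


outputs = [relu(v) for v in [-2.0, -0.5, 0.0, 1.5, 3.0]]
print(outputs)  # [0.0, 0.0, 0.0, 1.5, 3.0]
```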

Recurrent Neural Network (RNN) #

A type of neural network architecture designed for processing sequential data, enabling applications like language modeling.

Regularization #

Techniques preventing model overfitting by adding constraints to the learning process.

Reinforcement Learning #

A learning method where agents improve actions based on interactions with an environment to maximize rewards.

Reinforcement Learning from Human Feedback #

The practice of enhancing reinforcement learning by incorporating guidance from human feedback.

Reinforcement Learning in the Real World #

The application of reinforcement learning to real-world scenarios such as robotics and autonomous systems.

Reinforcement Learning with Function Approximation #

The process of using neural networks to approximate value functions in reinforcement learning for enhanced decision-making.

Responsible AI #

Development and deployment of AI systems while considering ethical, societal, and transparency aspects.

Restricted Boltzmann Machines (RBMs) #

Unsupervised neural networks used for feature extraction and data representation.

Reward Shaping #

Adapting reward functions in reinforcement learning to guide agents toward desired behaviors.

RL Simulation Environments #

Simulated settings used to train and test reinforcement learning agents, allowing safe and efficient learning.

Robustly Optimized BERT-Pretraining Approach (RoBERTa) #

An advanced pretraining technique for natural language processing, extending the capabilities of BERT.

Robustness in AI #

The ability of AI models to perform consistently across various conditions and inputs.

Saliency Maps #

Visualizations that highlight influential regions in input data, helping to interpret model decisions.

Scene Understanding #

An AI’s capacity to comprehend and interpret visual scenes, crucial for computer vision applications like autonomous driving.

Self-Attention #

An attention mechanism enabling neural networks to weigh the importance of different positions within a sequence to capture relationships and dependencies.

Self-Play in RL #

A reinforcement learning technique where agents improve through self-generated challenges by competing against previous versions.

Self-Supervised Learning #

A learning approach where AI systems generate their own training labels from available data.

Semantic Segmentation #

A computer vision technique categorizing individual pixels in images, valuable for detailed scene analysis.

Semi-Supervised Learning #

A learning paradigm that combines labeled and unlabeled data to enhance model training.

Sentiment Analysis #

A model’s ability to assess sentiment expressed in text, often used in social media analysis.

Seq2Seq Models (Sequence-to-Sequence) #

Neural architectures that translate sequences from one domain to another, which are central to machine translation.

Sequence Generation #

AI’s capability to create sequential data, prominently used in language generation tasks.

Sequence Modeling #

The application of neural networks to model sequential data.

Sequential Data #

A type of information where the order and arrangement of elements hold significance, such as text or an event series.

Shapley Additive Explanations (SHAP) #

Method for explaining machine learning model outputs, attributing predictions to input features.

Sigmoid Function #

A mathematical function used in artificial neural networks to introduce non-linearity into the model. It maps input values to a range between 0 and 1 and smoothly transitions as inputs vary, resulting in an S-shaped (or sigmoid) curve. It’s particularly useful for binary classification tasks with outputs in the form of a probability score.
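
The sigmoid is defined as σ(x) = 1 / (1 + e^(−x)). The sketch below is one way to compute it. The branch on the sign of `x` is an implementation choice made here to avoid overflow for large negative inputs, not part of the definition.

```python
import math


def sigmoid(x):
    """Map any real number to a value between 0 and 1."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, use the equivalent form e^x / (1 + e^x) to avoid overflow.
    z = math.exp(x)
    return z / (1.0 + z)


print(sigmoid(0.0))                # 0.5
print(sigmoid(-5.0) < 0.5 < sigmoid(5.0))  # True
```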

Soft Actor-Critic (SAC) #

A reinforcement learning algorithm optimizing policies for maximum reward while also introducing entropy. It attempts to succeed at the task while acting as randomly as possible.

Softmax Function #

A mathematical function that transforms raw scores into probability distributions, resulting in probabilities for each possible outcome.
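
Softmax exponentiates each score and divides by the sum of all the exponentials, so the outputs are positive and sum to 1. In the sketch below, subtracting the maximum score first is a common numerical-stability step that leaves the result unchanged. The sample scores are made up for illustration.

```python
import math


def softmax(scores):
    """Convert raw scores into a probability distribution."""
    shift = max(scores)  # subtracting the max prevents overflow in exp
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])
print(probs)       # the largest score receives the largest probability
print(sum(probs))  # approximately 1.0
```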

Spatiotemporal Data Analysis #

Examination of data with both spatial and temporal dimensions, crucial for understanding dynamic processes.

Spatiotemporal Sequence Forecasting #

The process of collecting data across both space and time and using it to predict future developments over time, used in fields like climate modeling.

Speech-to-Text Conversion #

The conversion of spoken language into written text, essential for transcription and voice assistants.

Stemming #

The process of reducing words to their base form to simplify analysis, commonly applied in natural language processing.

Stochastic Gradient Descent (SGD) #

A variant of gradient descent that randomly samples a subset (or “mini-batch”) of the data in each iteration and uses only that subset to reduce error. This can lead to faster convergence and reduced computational requirements.
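
As a rough sketch, the example below fits a line y = w·x + b to four points using mini-batches of two, minimizing squared error. The function name, learning rate, epoch count, and data are all assumptions chosen so that this toy problem converges.

```python
import random


def sgd_fit_line(points, learning_rate=0.05, epochs=500, batch_size=2, seed=0):
    """Fit y = w * x + b with mini-batch SGD on mean squared error."""
    rng = random.Random(seed)
    points = list(points)  # copy so the caller's list is not reordered
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(points)  # a fresh random ordering each epoch
        for start in range(0, len(points), batch_size):
            batch = points[start:start + batch_size]
            # Gradients of the mean squared error over this mini-batch only.
            grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2 * (w * x + b - y) for x, y in batch) / len(batch)
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


points = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # generated by y = 2x + 1
w, b = sgd_fit_line(points)
print(round(w, 2), round(b, 2))
```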

Stop Words #

Frequently used words like articles that are disregarded in text analysis due to their minimal informative value.

Style Transfer #

A technique merging the artistic style of one image with the content from another, producing novel visuals.

StyleGAN #

A generative adversarial network whose style-based generator allows control over visual attributes of the generated images at different levels of detail, from overall structure to fine texture.

Supervised Learning #

A learning approach where models are trained using labeled data to make predictions or classifications.

Symbolic AI #

Algorithms that process symbols representing real-world objects, ideas, and the connections between them.

Synthetic Data #

Artificially generated data created to train machine learning models, often used when real data is limited.

Synthetic Media #

Any type of AI-created media content including images, videos, text, and audio, useful in creative and communications-related industries.

Synthetic Minority Over-sampling Technique (SMOTE) #

A technique for generating synthetic samples to address a class imbalance in datasets.

T-Distributed Stochastic Neighbor Embedding (t-SNE) #

A dimensionality reduction technique visualizing high-dimensional data in lower-dimensional space.

Text-to-Text Transfer Transformer (T5) #

A transformer model trained to perform various text-to-text tasks, exemplifying versatile text processing.

Text Classification #

The categorization of text into predefined classes or categories, crucial for content organization and sentiment analysis.

Text Generation #

A process where an agent produces new text-based content, applicable in chatbots, creative writing, and more.

Text Summarization #

The generation of concise summaries from lengthy text documents, aiding information extraction.

Text-to-Image Generation #

The creation of images based on textual descriptions.

Text-to-Speech Conversion #

The transformation of written text into spoken language, powering applications like voice assistants. Sometimes called “read aloud” technology.

Tokenization #

The division of text into smaller units (tokens) for analysis, typically words or subwords.
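
The sketch below shows a very simple word-level tokenizer built on a regular expression. It is an illustration only. Production language models typically use learned subword tokenizers, which this example does not attempt to reproduce.

```python
import re


def tokenize(text):
    """Split text into lowercase word tokens and single punctuation tokens."""
    # \w+ matches runs of letters, digits, or underscores;
    # [^\w\s] matches any single character that is neither a word character nor whitespace.
    return re.findall(r"\w+|[^\w\s]", text.lower())


tokens = tokenize("Generative AI isn't magic!")
print(tokens)  # ['generative', 'ai', 'isn', "'", 't', 'magic', '!']
```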

Transfer Learning #

A strategy that applies a pre-trained model to problems it’s unfamiliar with, allowing it to generalize knowledge it’s gained from a previous task.

Transformer Model #

A type of neural network that uses self-attention to learn context and tracks relationships in sequential data.

Transformer #

Neural network architecture leveraging self-attention to create general models that can be customized for desired tasks. This enables the use of transfer learning, where the pre-trained models can be used in place of large cumbersome amounts of data.

Trust Region Policy Optimization (TRPO) #

A reinforcement learning algorithm that optimizes policies while constraining each update to a “trust region,” so the new policy does not stray too far from the previous one.

Trustworthy AI #

An AI system developed and used ethically, transparently, and with consideration for societal impact.

Twin Delayed DDPG (TD3) #

An advanced AI technique that improves deep reinforcement learning by integrating several cutting-edge strategies including policy gradient, actor-critics, and enhanced deep Q-learning.

Uncertainty Estimation #

The quantification of prediction uncertainty in neural network models. This is helpful because deep learning networks tend to make overly confident predictions, and wrong answers made with high confidence can cause serious problems in the real world. Also known as uncertainty quantification.

Underfitting #

A problem that happens when a model is overly simple, causing it to fail to capture underlying patterns in the data.

Uniform Manifold Approximation and Projection (UMAP) #

A dimensionality reduction technique using Riemannian geometry and algebraic topology to enable visualization of complex data in lower dimensions.

Unsupervised Image-to-Image Translation #

The transformation of images between domains without paired data, fostering creative image manipulation.

Unsupervised Learning #

The practice of training models on data without explicit target labels, allowing them to discover hidden patterns in data.

Unsupervised RL #

A reinforcement learning approach without explicit external rewards, often relying on intrinsic motivation.

VAE Disentanglement #

When a variational autoencoder learns interpretable and independent features in data representation.

Value Iteration Networks #

Neural networks employed for value iteration in reinforcement learning tasks.

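Variational Autoencoder (VAE) #

An autoencoder model that encodes inputs as probability distributions over a latent space. It is trained to minimize the reconstruction error between the decoded data and the original data while keeping the latent distributions close to a chosen prior, which makes it possible to generate new samples.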

Vision Transformer (ViT) #

A transformer model that splits an image into patches and applies self-attention across them, originally for image classification tasks.

Voice Synthesis #

The AI-driven generation of spoken language, pivotal for applications like text-to-speech conversion.

Voxel-Based Model #

A model that represents 3D space using small cubes called voxels, which are essentially three-dimensional pixels. This is vital for 3D scene understanding.

Wasserstein GAN #

A GAN variant that trains with the Wasserstein distance as its loss, built for improved stability during training.

Word Embedding #

The numerical representation of a word in natural language processing, used to capture semantic relationships between words.

Word2Vec #

A method for learning word embeddings from a large text corpus, facilitating semantic understanding.

World Model #

An AI model that simulates real-world dynamics to make predictions and decisions, vital in fields like robotics, weather forecasting, and computer vision.

Zero-Shot Learning #

A technique that allows models to classify unfamiliar objects without receiving specific training for those classes. This showcases transferable knowledge and is crucial for systems intended to act autonomously.

What to Take From This AI Glossary #

We hope that this glossary has equipped you with a deeper understanding of the pivotal terms and concepts that govern the world of artificial intelligence. Understanding the complex language of AI is not just a prerequisite but a powerful lever in steering your business towards a path of innovation and efficiency, fueled by informed decisions and strategic implementations.

Machine learning, a cornerstone in the AI domain, has proliferated in recent years, giving birth to a myriad of applications that can analyze data and patterns to make predictions, optimize processes, and even foster creativity. This glossary has shed light on the various nuances of machine learning, from supervised learning to deep learning, each bringing a different set of tools and perspectives to the fore.

Furthermore, our journey encompassed the dynamic world of large language models (LLMs), diving deep into their structure, function, and immense capabilities. Understanding different LLMs equips you with the knowledge to choose the one that aligns with your business goals, ensuring a tailored approach to integrating AI into your operations.

The technical insight gained from this glossary positions you at a vantage point, allowing you to sift through the noise and home in on the value-driven applications of AI. It encourages you to engage with AI not as a mere tool but as a collaborative partner, enhancing productivity, fostering innovation, and facilitating smart, data-driven decisions.

As you forge ahead, remember that understanding the intricate web of AI is your first step towards leveraging its expansive capabilities for your business’s success. We therefore encourage you to Install AIPRM today. This browser extension enhances the capabilities of LLMs, offering features that streamline workflow and encourage team collaboration, allowing for a more efficient, informed, and sophisticated utilization of AI tools.

Thank you for investing your time in mastering the language of AI through our glossary. We envision this resource serving as a springboard, catapulting your business into a future rich with possibilities, guided by intelligent insights, and backed by the powerful backbone of AI technologies.